A declassified wartime guide to disruption offers an unexpected perspective on how modern organisations manage complexity, scrutiny and decision-making

On 17 January 1944, General William Donovan, head of the Office of Strategic Services (OSS) - the wartime intelligence agency that was a forerunner of the CIA - authorised a document that remains one of the more unusual artefacts from the Second World War.

It wasn’t a conventional weapon, but a guide to influencing behaviour.

The ‘Simple Sabotage Field Manual’ was designed for the “citizen-saboteur” - the ordinary person living under occupation who wanted to resist but lacked access to traditional means.

Its effectiveness lay in its subtlety: it didn’t ask people to blow up buildings; it asked them to be slightly, consistently, and “plausibly” inefficient.

Today, nearly 20 years after the manual’s declassification, it offers an interesting lens through which to explore modern governance - not because modern officials are saboteurs, but because it suggests that the line between “rigorous process” and systemic delay may be thinner than we think.

The Art of Simple Sabotage

The OSS manual makes a clear distinction between “physical sabotage” and what it calls the “human element”. While it does include references to damaging equipment, much of its focus is on disrupting administrative systems.

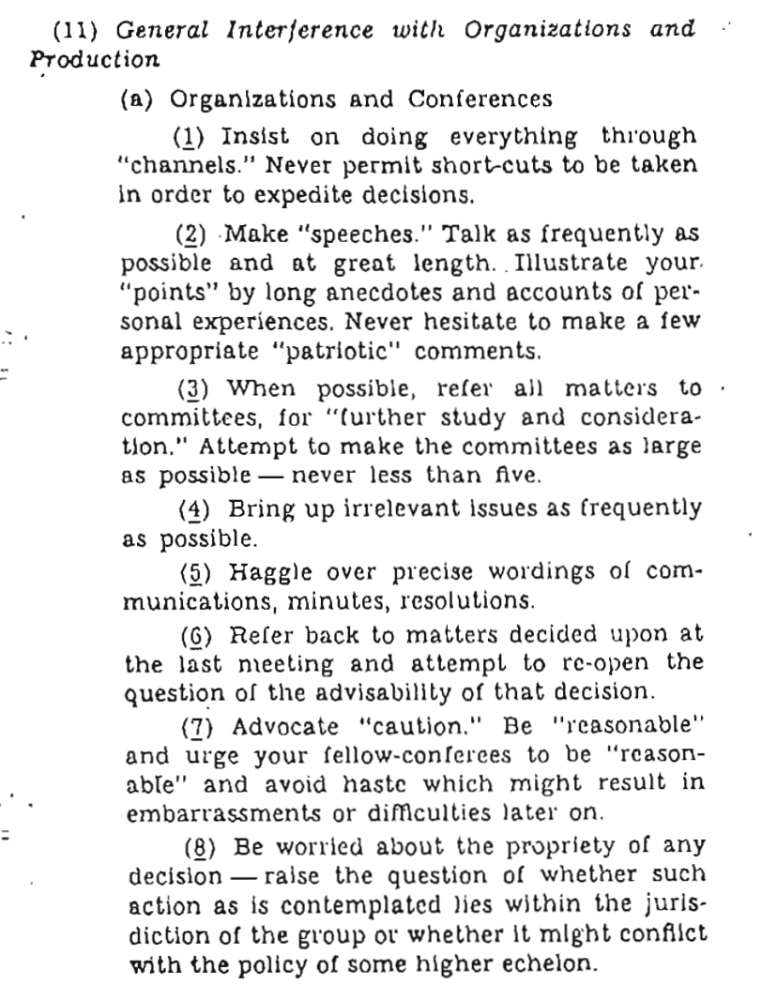

Its advice to office workers reads, in part, like a catalogue of behaviours that can slow organisations down:

- Insist on everything going through proper channels

- Refer matters to committees for “further study”

- Talk as frequently as possible, including anecdotes of personal experiences

- Advocate extreme caution and warn against acting too quickly

In a wartime context, these actions were intended to undermine hostile systems.

However, these behaviours may feel familiar to those who have worked in large organisations - whether in the public or private sector.

The Intent Paradox

In 1944, these behaviours were “sabotage” because their intent was to destroy. In 2026, these same behaviours are often described as “best practice” because their intent is to protect.

Take the example of referring decisions to committees. In the OSS manual, this is framed as a way to delay or prevent action altogether.

But in modern democratic systems, committees are a core part of scrutiny, allowing proposals to be examined in detail before decisions are made.

The behaviour can appear similar - particularly in terms of delay - even if the intent is very different.

For instance, on the Isle of Man, decisions pass through Tynwald, the Island’s parliament, which includes the elected House of Keys, the Legislative Council, and Tynwald Court itself. Committees play a role in examining policy and legislation, helping to ensure that decisions are properly considered.

From one perspective, this could be seen as a necessary feature of a system that prioritises oversight. From another, it could be viewed as part of a broader trade-off between efficiency and accountability.

Former MHK and government minister David Cretney says that tension is familiar to many who have worked within political systems.

He told Manx Radio that while scrutiny and fact-based decision making are important, delays can sometimes become excessive.

“Things do take forever and you have to be persistent and keep raising matters if you’re not a member of the government”, he said.

“I think it’s important decisions are thought through properly and are fact-based so there will be a tension that emerges, but it becomes farcical the amount of time that some things can be discussed and make no progress.”

Mr Cretney added that one of the Island’s strengths has traditionally been its ability to act more quickly because of its political autonomy.

The “Plausible Excuse” and Culture of Risk

One of the more striking ideas in the manual is the concept of the “plausible excuse.”

It suggests that a saboteur should never appear deliberate - their actions should be indistinguishable from ordinary mistakes or excessive caution.

In a modern context, this idea resonates differently, because in complex or high-stakes areas such as public policy or infrastructure, the consequences of mistakes can be significant.

As a result, systems often place a strong emphasis on risk management.

Where the cost of error is high, caution can become the default, and additional layers of consultation or review may be introduced not to delay progress, but to reduce the likelihood of unintended outcomes.

In such cases, the result can be similar: systems may slow down, even when the intention is to protect rather than hinder.

The Emergent System

Large systems often develop what are known as “emergent properties” - outcomes that are not explicitly designed, but arise from the interaction of many individual decisions and processes.

As layers of approval, oversight, and procedure build up over time, they can begin to shape how an organisation functions.

In some cases, this can lead to increased complexity, longer decision-making timelines, and greater administrative burden.

This doesn’t necessarily require poor intent. It may simply reflect how systems evolve as safeguards are added and responsibilities are distributed.

Former minister Phil Gawne, also Manx Radio’s political correspondent, argues that institutional complexity often develops incrementally rather than deliberately.

He says new layers of process are frequently introduced after failures or controversies. However, he believes growing complexity “often happens for the best of reasons”.

“Things go wrong, committees investigate what’s gone wrong and then recommendations are put forward.

“It’s very rare that a recommendation ever comes up that says the process is too clunky and needs streamlining. What typically happens is more rules are implemented.”

Mr Gawne says that while safeguards and accountability are important, additional process can create unintended consequences, slowing projects and increasing costs.

“There is a trade-off [between streamlining systems and accountability]. It’s reasonably well-known that government projects cost between 25 and 50 percent more than the private sector because it has to be a fair and transparent process in how government is spending public money, so more work has to be done.”

He also believes some political decisions can be slowed intentionally: “I know in Council of Ministers I’ve been in, this approach has been taken where we say ‘this is a really difficult problem, let’s just sit on it and let it time itself out’.”

However, others might argue that lengthy scrutiny and procedural safeguards are an unavoidable feature of democratic accountability, particularly when public money and long-term policy decisions are involved.

The Democratic Dilemma

Tynwald is recognised as the world’s oldest continuous parliament, and is preparing to mark 1,050 years of operation in 2029.

While the 1944 manual is a relatively recent document by comparison, questions about how decisions are considered, debated and implemented have long been part of all parliamentary systems, even as the structures and processes themselves have evolved over time.

And while the wartime manual was designed to weaken systems imposed on people, today it offers a way of examining the systems societies build for themselves.

In a world that moves faster than ever, the challenge for democracies - not just on the Isle of Man, but worldwide - remains one of balance. How do we ensure that safeguards act as vital protections for the public, rather than the very wrenches in the works that General Donovan once dreamt of?

Perhaps the real question is not whether modern governments resemble sabotage, but whether it’s possible to build systems that don’t.
